February 12, 2022

Joe Francis, founder of Brillder, reflects on the impact of the multiple choice question on EdTech.

“Multiple choice” sounds good – a gleaming superstore with thirty types of jam will always trump a Soviet era queue for a jar of pickled cabbage. Yet the truth for EdTech is that while multiple choice suits machines down to the ground, it’s not especially flexible or educational.

Alongside its uglier little cousin, the true/false binary, the multiple choice question (MCQ) dominates our industry. And while numerous platforms promote the agile might of AI these days, the reality is that machines can’t handle the most important educational question of all – the open question.

Douglas Adams got this right in The Hitchhiker’s Guide to the Galaxy, where the hyper-advanced supercomputer Deep Thought takes 7.5 million years to deduce that the Answer to the Ultimate Question of Life, the Universe and Everything is 42. But the question needn’t be so profound to nonplus computers. Try: How would you improve the British political system? Could the First World War have been avoided? Does Hamlet love Ophelia? How does landscape influence farming?

Alexa won’t have a clue.

In the UK, most A-level subjects (anything requiring an essay) ask open questions all the time. Facts and evidence play a huge part in good answers, but assessment is more subtle than mere “right” and “wrong”. The skills of argument and interpretation – very much higher-level skills – are what should matter.

Except, often they don’t. In the UK, we have Charles Dickens’ caricature of the utilitarian educator, Mr Gradgrind of Hard Times, who declared: “Now, what I want is, Facts. Teach these boys and girls nothing but Facts. Facts alone are wanted in life.” Gradgrind would certainly have approved of an education that could be assessed by MCQ. But, in fact, the Victorians did not have them: multiple choice was a 20th-century American invention.

In the US, an S.A.T. score above 1500 will still get you an interview for Harvard. The history of the S.A.T. is closely connected to the history of both multiple choice and EdTech, and it begins with Frederick Kelly, who sought to devise the most objective possible format for assessment. During WW1 he soon had the US Army using questions like these, worryingly, to identify officer material:


The question is stupid, but the format is genius. The College Board, responsible for overseeing the transition from high school to university, quickly adopted it, and the S.A.T. was born. However, it brought a challenge: MCQs could be marked relatively quickly, and by markers without any specialist subject knowledge, but only by hand. Marking was so dull, in fact, that humans still made mistakes.

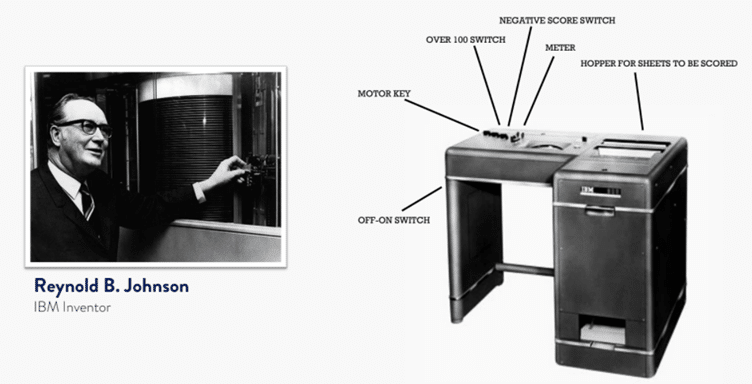

Then, in the early 1930s, the first EdTech was born: a mechanised marking technology (not, significantly, a mechanised learning technology). Reynolds Johnson, a brilliant science teacher from Michigan who went on to invent the hard drive, created his “scoring apparatus”. IBM were so impressed that they hired him and dubbed it the IBM 805. Like modern EdTech, it was all about scalability: it accepted tests on an industrial scale into its “hopper” and churned through them one thousand times faster than a human being could.

Thus the MCQ fits the requirements of tech, but not really those of education. As with so much in our Tech Age, we have to ask whether we adapt to technology or it adapts to us. After all, multiple choice does not really reflect how we talk or think: “Would you [a] marry me, [b] not marry me, [c] think about it, or [d] cohabit indefinitely?” is not likely to elicit the desired answer. Nor does the MCQ necessarily test what we really know (the French for ‘nut allergy’, for example) so much as invite us to take an educated guess between [a] allergie aux noix, [b] haine des nuits, [c] noisettisme and [d] nutelleur. Your first instinct is to eliminate the obviously silly, after which you sift what you think you know from what you suspect to be untrue. If that doesn’t work, you can always ask the audience, phone a friend et cetera.

At Brillder, yes, we sometimes ask MCQs, but we have to be careful because we are trying to cater to sixth-formers whose studies require significant nuance and sophistication. We mix MCQs with other question types – pair match, hierarchy, sequence, word and line highlighting and more. Nothing technologically miraculous – the most important aspect of an interactive ‘test-to-teach’ method like ours is that every question is prefaced by, or contains, ideas and hints which help inform the answer. This requires skilled human authorship. So far, I am pleased to say, university-educated humans far exceed the capabilities of computers. We also preface each of our learning units with an open question, even the STEM ones.

I am going to leave you with a question which is certainly ‘closed’ but which draws upon intuition alongside academic knowledge. The aim is not to interrogate the learner but to have them apply what they know to solve a puzzle – here, partly with the help of images.

It’s certainly multiple choice, but it’s also layered with different scoring outcomes for different decisions. Touchscreens help: the pleasure of moving things about, as opposed to ticking a box, allows learning to extend beyond mere selection and elimination.

But the quality of education depends in large part on the quality of the questions we ask and answer. Multiple choice will always be with us, but we mustn’t allow the limitations it imposes to shape the education we deliver.
